AI-Built Internal Tool Governance

The living registry and review process for AI-built internal tools.

AI made internal tools cheap to build. Ownership, review, logging, and retirement did not. Lamdis starts with a scoped Review to baseline what your teams have shipped, then keeps the registry current as agents, builders, and teams create new tools, connect systems, and change workflows.

Baseline what exists. Keep it current. Give leadership a defensible answer.

Why this is showing up now

AI made internal tools cheap. Governance didn't.

Slack bots, Retool apps, scripts, admin dashboards, support helpers, data workflows, AI agents, spreadsheet automations, MCP-connected tools, customer summarizers, reporting pipelines, refund and dispute workflows.

Useful tools become operational infrastructure without anyone deciding. The risk is not that AI was used to write code. The risk is that a useful tool becomes load-bearing without clear ownership, review, logging, access control, maintenance expectations, or retirement criteria.

This isn't about who's using ChatGPT. It's the Slack bot your Sales Ops manager built last Tuesday with Cursor that now decides $40k refund cases — and that nobody put on a service catalog.

Existing AppSec, SDLC, and governance processes were not designed for tools built in an afternoon by a business team. That is the gap.

The Lamdis Review

A scoped engagement, sized to your environment.

We combine structured discovery, technical integrations, and expert review to find what your teams are actually relying on, tier the risk, and leave your team with a working operating model.

The Review creates the baseline. The Lamdis platform keeps it alive.

Structured discovery

We combine interviews, technical integrations, and expert review to find the AI-built tools your teams actually rely on — especially the ones that have quietly become operational infrastructure.

Risk tiered, not bureaucratized

Tier 0 to Tier 4. Heavy review only where it actually matters. No 50-page policy document, no bureaucracy where everything is high risk.

Operating model your team owns

Owners, reviewers, intake paths, escalation rules, remediation backlog. Wired into the Lamdis platform so it keeps working after we leave.

How the Review runs

Five phases. Scoped to your environment.

The depth of each phase scales with the size and complexity of what your teams have built.

Discovery

Interviews with engineering, security, platform, and the business teams using internal tools. Existing inventories, AppSec process, AI usage policies.

Inventory & classification

Build the tool inventory. Classify data exposure, runtime AI use, customer impact, compliance impact, ownership clarity, current review status.

Gap analysis

Identify unowned tools, overprivileged tools, operationally critical tools without fallback, runtime AI without logging, tools outside existing review paths.

Operating model

Risk-tier model, intake form, review decision tree, ownership requirements, escalation rules, prioritized remediation backlog.

Executive readout

Findings, prioritized risks, recommended review process, and a forward plan. The version leadership reads.

What You Get

Deliverables you can act on.

Internal AI Tool Inventory

A structured list of reviewed tools, workflows, scripts, dashboards, agents, and automations. Owner, data touched, criticality, AI involvement, current review status.

Risk Tiering Matrix

Each tool classified Tier 0–4 with a short reason. Refund Review Assistant — Tier 3, customer financial impact. SQL Helper Script — Tier 1, no runtime AI.

Ownership & Risk Gap Report

Prioritized findings leadership can act on. Tools with no owner, customer data without access review, business-critical tools without fallback, duplicate tools across teams.

Review Pathway

A lightweight decision tree. Personal productivity? No review. Touches customer data? Data review. Writes to systems? Engineering review. Affects regulated decisions? Compliance.
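As a rough illustration, the decision tree above could be sketched in a few lines of Python. The field names and the `required_reviews` function are hypothetical, not part of the Lamdis platform. The sketch also assumes reviews stack when a tool triggers more than one question, and that a tool triggering none of them is the personal-productivity case. The source does not say either way.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ToolProfile:
    """Hypothetical intake answers for one tool (illustrative field names)."""
    touches_customer_data: bool = False
    writes_to_systems: bool = False
    affects_regulated_decisions: bool = False


def required_reviews(tool: ToolProfile) -> list[str]:
    """Map intake answers to review paths.

    Assumption: reviews accumulate. An empty list means the tool is
    treated as personal productivity and needs no review.
    """
    reviews = []
    if tool.touches_customer_data:
        reviews.append("data review")
    if tool.writes_to_systems:
        reviews.append("engineering review")
    if tool.affects_regulated_decisions:
        reviews.append("compliance review")
    return reviews


# Example: a tool that reads customer data and writes to a production system.
print(required_reviews(ToolProfile(touches_customer_data=True, writes_to_systems=True)))
```

Keeping the pathway as a handful of yes/no questions is what makes it lightweight: most tools exit with an empty list.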

Remediation Backlog

Practical, prioritized actions with owners. Assign owner for Refund Review Assistant. Add usage logs for Compliance Summarizer. Retire duplicate spreadsheet automation.

Executive Readout

A short leadership summary. What we found, what matters, what is safe to ignore, what needs action, where current governance works, recommended operating model.

What the Review Covers

Eight focused areas. No 50-page policy document.

Internal tool inventory

Surface the tools, automations, scripts, agents, and dashboards that teams rely on. Owner, builder, users, data touched, business process.

Risk tiering

A practical Tier 0–4 model that tells you what needs serious review and what can move fast. Not a bureaucracy where everything is high risk.

Ownership & accountability

Who built it, who maintains it, who responds when it breaks, who owns the risk if it produces a bad output. The map and the gaps.

Data exposure & system access

What data each tool reads and writes, what production systems it touches, where outputs go, whether secrets are stored safely.

Operational dependency

What stops working if this tool fails. Which tools are more business-critical than leadership realizes. Where fallbacks exist.

Runtime AI behavior

Where AI is used at runtime to summarize, classify, recommend, decide, route, or generate. Where humans review today, and where they should.

Logging, auditability, evidence

For Tier 2+ tools: can you reconstruct who used each one, what input went in, what output came out, and which version was active?
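One way to picture that evidence is a single log record per invocation that captures the four facts named above. This is a minimal sketch under stated assumptions: the `AuditRecord` shape, its field names, and the JSON-lines format are illustrative choices, not a Lamdis schema.

```python
import json
from dataclasses import asdict, dataclass
from datetime import datetime, timezone


@dataclass(frozen=True)
class AuditRecord:
    """Hypothetical per-invocation record: who, input, output, version."""
    tool: str
    user: str
    tool_version: str
    input_text: str
    output_text: str
    timestamp: str  # ISO 8601, UTC


def make_record(tool, user, tool_version, input_text, output_text, now=None):
    """Build a record, stamping it with the current UTC time by default."""
    now = now or datetime.now(timezone.utc)
    return AuditRecord(tool, user, tool_version, input_text, output_text, now.isoformat())


def to_log_line(record: AuditRecord) -> str:
    """Serialize to one JSON line so records can be appended to a log file."""
    return json.dumps(asdict(record), sort_keys=True)


# Example with made-up values.
record = make_record("Compliance Summarizer", "jdoe", "prompt-v3", "case notes", "summary")
print(to_log_line(record))
```

Recording the active version alongside input and output is what lets a reviewer later explain why the same input produced a different result.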

Change management & lifecycle

How changes are made and reviewed. Whether prompts and configs are versioned. Whether anyone is checking for duplicates or retirements.

Risk Tiering

What needs serious review. What can move fast.

A practical model. Heavy review only where it actually matters.

Tier 0

Examples: Note summarization, drafting, brainstorming.

Requires: None or minimal policy guidance.

Tier 1

Examples: Internal Slack bot, spreadsheet automation, meeting summarizer.

Requires: Owner, basic data check, basic access review.

Tier 2

Examples: Support triage helper, internal admin dashboard, reporting automation.

Requires: Owner, logging, access control, failure mode, change process.

Tier 3

Examples: Refund recommendation, fraud assistant, payment exception, customer-risk classifier.

Requires: Formal intake, AppSec, audit logs, human approval path, monitoring, rollback.

Tier 4

Examples: Lending support, claims recommendation, employment screening, compliance enforcement.

Requires: Governance, legal/compliance, evidence retention, human oversight, explainability, audit trail.
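To make the tier model concrete, here is a minimal sketch that treats the five requirement lists above as a lookup table and reports which controls a tool still lacks. It assumes the five lists map in order to Tier 0 through Tier 4, and it does not assume higher tiers inherit lower-tier controls, since the source does not say. `TIER_CONTROLS` and `missing_controls` are hypothetical names.

```python
# Controls per tier, copied from the lists above (order assumed: Tier 0 to Tier 4).
TIER_CONTROLS = {
    0: [],  # "None or minimal policy guidance"
    1: ["owner", "basic data check", "basic access review"],
    2: ["owner", "logging", "access control", "failure mode", "change process"],
    3: ["formal intake", "AppSec", "audit logs", "human approval path",
        "monitoring", "rollback"],
    4: ["governance", "legal/compliance", "evidence retention",
        "human oversight", "explainability", "audit trail"],
}


def missing_controls(tier: int, in_place: set[str]) -> list[str]:
    """Return the controls required at this tier that the tool lacks."""
    return [control for control in TIER_CONTROLS[tier] if control not in in_place]


# Example: a Tier 2 tool that so far has only an owner and logging.
print(missing_controls(2, {"owner", "logging"}))
```

The output of a check like this is the kind of item that would land in a remediation backlog.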

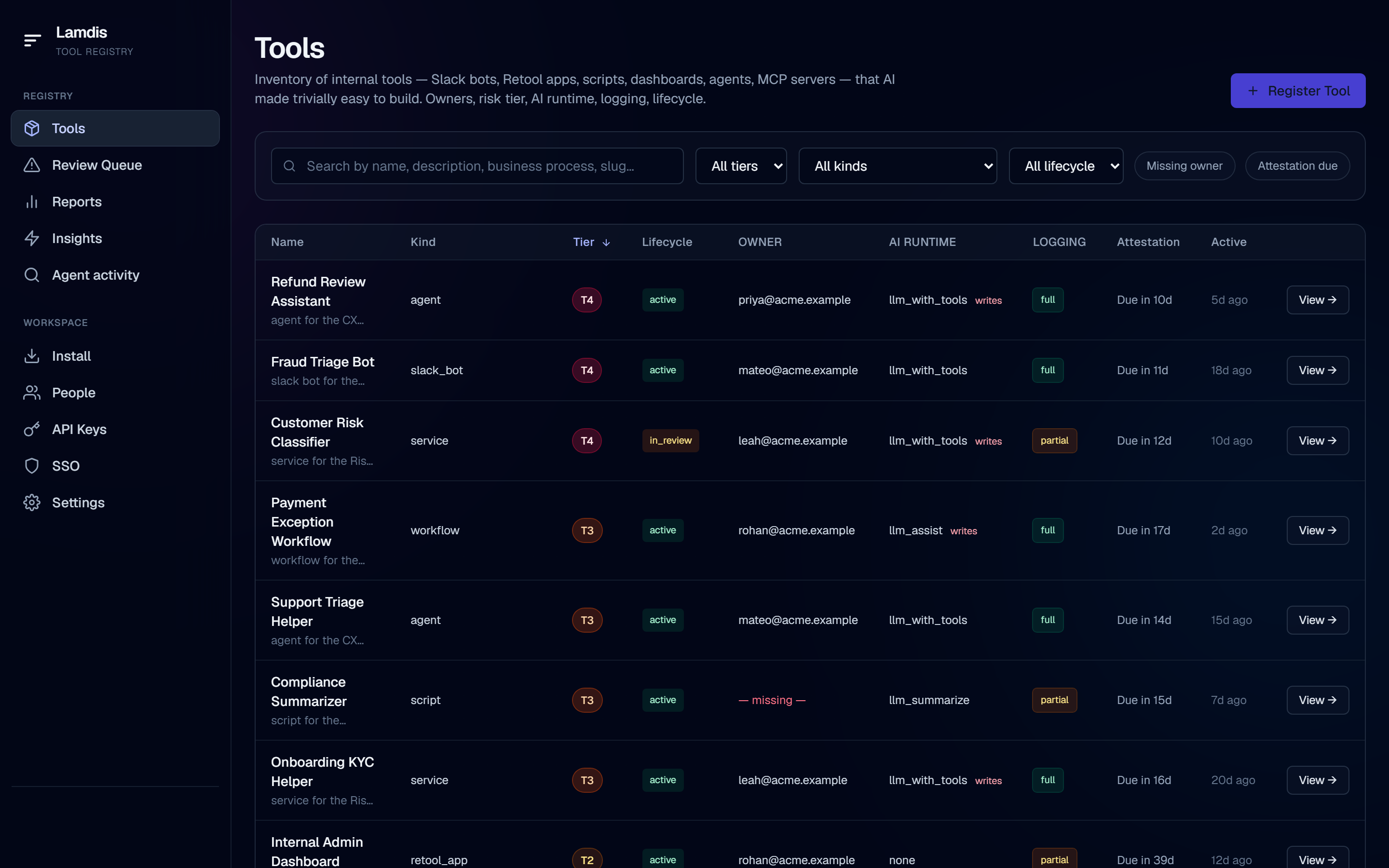

The platform

The living registry behind the Review.

Lamdis connects to the places AI-built tools emerge, so your inventory doesn't depend on quarterly interviews, stale spreadsheets, or someone remembering to update a service catalog. The Review gives your team the first trusted baseline. The platform keeps it current as new tools are created, changed, attested, reviewed, or retired.

Direct registration, connector-based discovery, owner attestation, and reviewer workflows — so the registry stays current without depending on any single source.

Connects where tools emerge

New tools can register as they're built — through agent workflows, MCP servers, repos, Slack, Retool, internal dashboards, scripts, and AI proxies. Not weeks later when someone gets around to it.

Keeps the baseline current

Detection, registration, classification, and ownership tracking continue after the Review. Owners attest on cadence. Reviewers tier new categories. Tools get retired when they should.

A defensible inventory, always

When leadership, audit, security, or compliance asks "what AI-built tools are running here, who owns them, and how risky are they?" — your team has the answer in the platform, not in a stale PDF.

Inside the platform

Three views built for weekly review.

The platform is where the joint work lives between engagements — and where the registry stays current on its own.

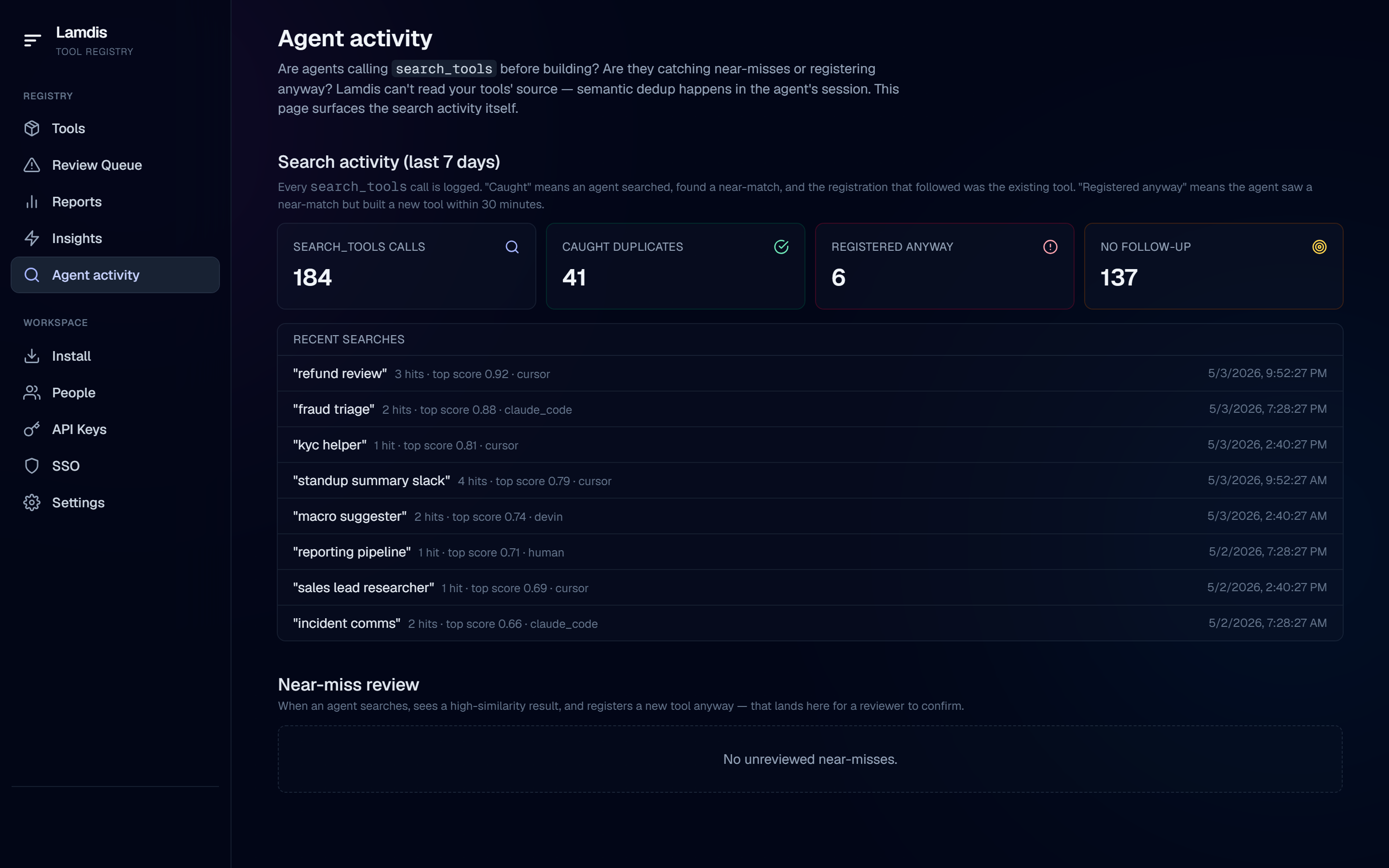

Activity

See what your teams are actually building, in real time.

A live view of the tools people are searching for, extending, and creating across the company. What problems are showing up most often, which teams are reaching for the same thing twice, where new categories are emerging — the signal leadership has never had before. The first place to look when someone asks "what are our teams building with AI right now?"

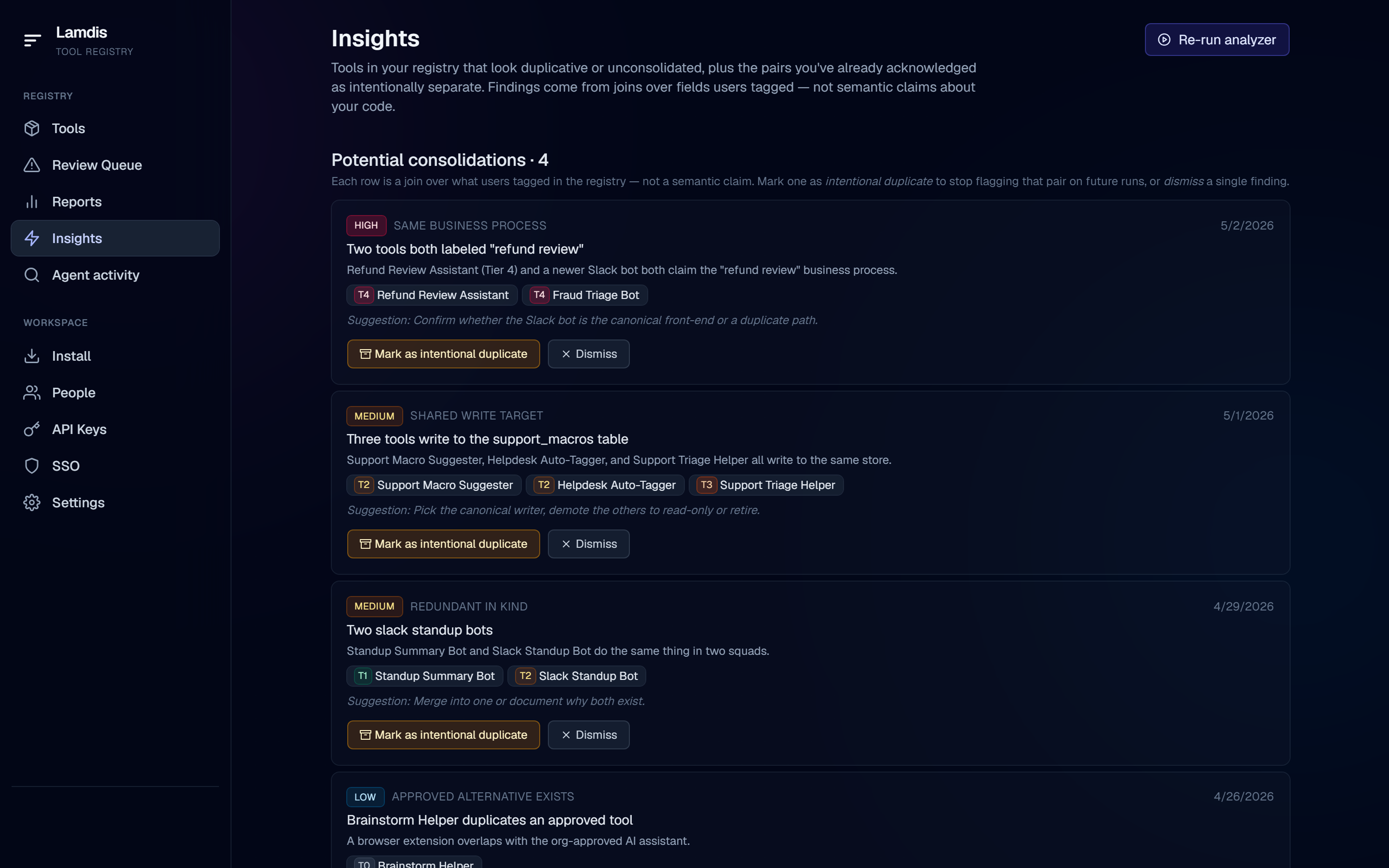

Insights

Catch duplicates and consolidation opportunities automatically.

The platform joins over what teams have actually registered and flags tools that share a business process, write to the same target, are redundant in kind, or duplicate an approved alternative. Acknowledge intentional duplicates so they stop flagging. The platform watches for sprawl so your reviewers don't have to spot it manually.
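Two of the signals named above, same business process and shared write target, can be sketched as a simple grouping over registry entries. This is an illustration only, not the platform's matching logic: the dictionary keys, the `duplicate_candidates` function, and the sample tools are assumptions.

```python
from collections import defaultdict
from itertools import combinations


def duplicate_candidates(tools, acknowledged=frozenset()):
    """Flag pairs of tools that share a business process or a write target.

    tools: list of dicts with "name", "business_process", "write_target".
    acknowledged: set of frozenset({name_a, name_b}) pairs marked intentional.
    Returns {(name_a, name_b): [reasons]} with names and reasons sorted.
    """
    groups = defaultdict(set)
    for tool in tools:
        for key in ("business_process", "write_target"):
            value = tool.get(key)
            if value:  # skip tools that do not declare this attribute
                groups[(key, value)].add(tool["name"])

    flagged = defaultdict(set)
    for (key, _value), names in groups.items():
        for a, b in combinations(sorted(names), 2):
            pair = frozenset((a, b))
            if pair not in acknowledged:
                flagged[pair].add(key)
    return {tuple(sorted(pair)): sorted(reasons) for pair, reasons in flagged.items()}


# Made-up registry entries for illustration.
registry = [
    {"name": "Refund Bot", "business_process": "refunds", "write_target": "billing_db"},
    {"name": "Refund Sheet", "business_process": "refunds", "write_target": None},
    {"name": "Invoice Fixer", "business_process": "invoicing", "write_target": "billing_db"},
]
for pair, reasons in duplicate_candidates(registry).items():
    print(pair, reasons)
```

Passing acknowledged pairs back in mirrors the behavior described above: an intentional duplicate is recorded once and stops being reported.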

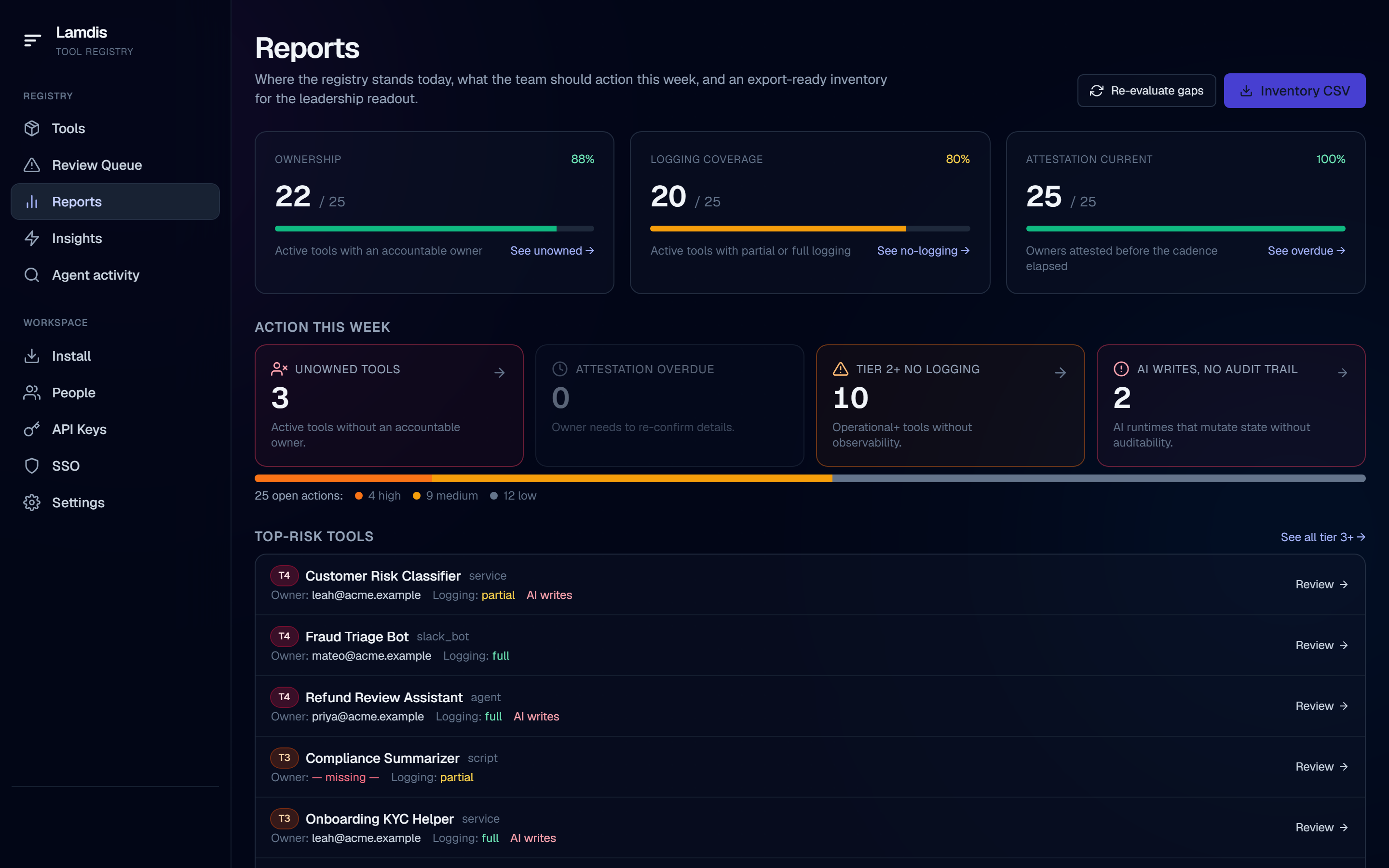

Reports

The leadership readout, always current.

Ownership coverage, logging coverage, attestation status, and the gap report — open actions ranked by severity. Top-risk tools surface on the same page. Generated from the live registry, so the version leadership reads is the version that matches reality today, not the snapshot from a quarterly audit.

The Ongoing Partnership

A platform that keeps watching. A team you can call.

AI-tool governance barely exists as a discipline. Customers don't have a head of it yet. After the Review, the Lamdis platform keeps detecting and tracking new tools while we stay on call to recalibrate tiers as new categories show up, advise on emerging patterns, and bring the cross-customer view back to your environment. The platform does the detection. The relationship keeps the model honest.

Quarterly recalibration

Tier review when new classes of tool emerge (today it's MCP servers and agentic workflows; next quarter it's something neither of us has seen yet). Updated operating model.

New-tool advisory channel

An open channel for "this new thing — how should we tier it?" Your reviewers stop guessing in isolation. Decisions are documented and re-used across teams.

Registry stays true

We keep the inventory current as the landscape shifts. Coding agents register new tools via MCP as they're built. Owners attest. Reviewers update tiers. Leadership pulls readouts.

Cross-customer signal

How AI-tool sprawl plays out across our engagements — which patterns turn load-bearing, which Slack-bot designs become incidents, where regulators are starting to lean. Industry signal, applied to your environment.

Engagement Options

Two ways to work with us. Both custom-priced.

Pricing is sized to the scope of your environment. We scope it together on the first call so the engagement matches what your team actually needs.

Initial Review

Scoped engagement

For teams that want a baseline they can trust.

- Inventory of AI-built internal tools

- Risk tiering (Tier 0–4) with reasons

- Ownership and gap report

- Operating model and review pathway

- Prioritized remediation backlog

- Executive readout

- Lamdis registry setup — your team takes the keys

Ongoing Partnership

Annual retainer

For teams that want to keep the work from going stale.

- Quarterly recalibration and tier review

- Open advisory channel for new tools and emerging categories

- Registry maintenance and remediation tracking

- Leadership reporting on a cadence

- Continued platform access for your team

- Cross-customer pattern signal

Platform-only access available on request for teams that already have an internal governance process.

Positioning

What this is. What this isn't.

What this is

A living registry and expert review process for AI-built internal tools. A scoped Review baselines what your teams have shipped; the platform keeps it current as new tools, agents, and workflows appear.

Software-enabled review and ongoing governance. The Review establishes the operating model. Operational, engineering-native, tied to outcomes the business already cares about.

What this isn't

- Not a replacement for your service catalog (Datadog, Backstage, Cortex, OpsLevel).

- Not a CMDB or ITSM (ServiceNow, Jira Service Management).

- Not a pure AI agent governance platform — agents are one kind of registered tool, not the only one.

- Not a one-off audit that delivers a binder.

- Not a self-serve dashboard with no operating model.

- Not a code scanner.

- Not an AppSec replacement.

- Not a model-risk platform.

Who It's For

Built for the teams that have to live with the answer.

Engineering leaders

Know which AI-built internal tools have become real operational dependencies — before they become outages, maintenance traps, or hidden risk.

Security & AppSec

Separate low-risk AI usage from tools that actually need security review. Less noise, better prioritization, clearer data exposure map.

Compliance & risk

Identify AI-assisted workflows that affect customer outcomes, regulated processes, or evidence trails. Better audit readiness, clearer human oversight.

Business operations

Keep useful AI-built tools alive without bureaucracy. Teams keep moving, useful internal tools get legitimized, business owners understand responsibilities.

Think this is happening in your org?

Engineering and security leaders at companies aggressively adopting AI: send a short note and we'll set up a conversation. Blunt takes welcome.

Or email hello@lamdis.ai, whichever is easier.